PRECONDITIONS: TENANT ADMIN CONSENT

✅Companion and related Transcription features require Tenant Administrator consent. This consent needs to be granted once in the Data Privacy Tenant Settings > Artificial Intelligence section.

Nimbus Companion allows you to configure AI-driven features that help improve your overall service performance, for example assisting your service users with:

- … AI-driven Transcription and post-call Summarization functions.

- … Live Captioning of spoken dialogue.

- … automated Tags and Codes suggestions based on the context detected during the call.

Speech Recognizers

✅All Transcription or Live Captioning features described on this page require prior setup of a Speech Recognizer in the Nimbus Configuration.

Speech Recognizers are preconfigured Nimbus (Administration) items that point to a 3rd-party service for voice processing. A Speech Recognizer specifies the language and engine used by the transcription features mentioned on this page.

DATA STORAGE SETTING

Note that there are data storage toggles for Transcription and Summarization data.

- While enabled (Default): Data is stored for 30 days¹. Afterwards, any data not stored elsewhere (e.g. via the Nimbus Power Automate Connector) is discarded.

- While disabled: Data is cached and (when optionally enabled) shown in the Nimbus UI for 1 hour¹. Caching allows the transcription data to be retrieved and stored via the Nimbus Power Automate Connector.

¹🔎For more details on data storage and retention, refer to the Nimbus Security Whitepapers in the Documents section.
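The following sketch checks whether Transcription/Summarization data can still be retrieved under each setting. It is an illustration only. It assumes the durations stated above: 30 days with storage enabled, and a 1-hour cache with storage disabled. The helper names are hypothetical and not part of any Nimbus API. The authoritative retention rules are those in the Security Whitepapers.

```python
from datetime import datetime, timedelta, timezone

# Durations taken from the Data Storage Setting description above.
STORED_RETENTION = timedelta(days=30)  # storage toggle enabled (default)
CACHE_RETENTION = timedelta(hours=1)   # storage toggle disabled (cache only)


def companion_data_available_until(created_at: datetime, storage_enabled: bool) -> datetime:
    """Hypothetical helper: last moment the data can still be retrieved
    (e.g. via the Power Automate Connector) before Nimbus discards it."""
    window = STORED_RETENTION if storage_enabled else CACHE_RETENTION
    return created_at + window


def is_still_retrievable(created_at: datetime, storage_enabled: bool, now: datetime) -> bool:
    # Data must be exported before the window closes; afterwards it is gone.
    return now < companion_data_available_until(created_at, storage_enabled)


if __name__ == "__main__":
    created = datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)
    two_hours_later = created + timedelta(hours=2)
    print(is_still_retrievable(created, storage_enabled=True, now=two_hours_later))
    print(is_still_retrievable(created, storage_enabled=False, now=two_hours_later))
```

With storage disabled, a flow that picks up the data two hours after the call is already too late. This is why the cache setting is usually paired with an automated Power Automate export.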

Companion Features


Transcription


PRECONDITIONS

✅Related Admin Use Case: Refer to Use Case - Setting Up Transcription for detailed step-by-step instructions.

Nimbus service and user licensing

🔎Transcription features have service and user requirements. These requirements apply to both mid-session Live Captioning and post-session Transcription / Summarization features.

| Requirement | Required license |
|---|---|
| Service requirements | Enterprise Routing, Contact Center |
| User requirements | Companion |

Speech Services

🔎The features described below use “Speech Services” provided by 3rd-party vendors. To offer our customers both convenience and flexibility, you can choose between a Nimbus-native implementation and Azure speech services.


| | Nimbus AI Services | Azure AI Services |
|---|---|---|
| Benefits | | |
| Challenges | | |
| Setup | | |

Optional: Power Automate connector integration

🔎Optional step: The Nimbus Power Automate Connector can extract Transcription/Summarization data for implementing additional use cases. To achieve this, the following steps need to be performed by an administrator:

- You need to set up a Power Automate flow that uses the “Companion” Trigger Event to react to any ongoing transcription session event.

- You then use the “Companion” Flow Action to capture the data for any further processing.
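Power Automate flows are built in the flow designer rather than in code. Still, the order of the two steps above can be modeled as a small event handler. This is a hypothetical sketch. The following names are illustrative stand-ins and do not reflect the connector's actual schema:

- `CompanionTriggerEvent`, its fields and the `"TranscriptionCompleted"` event type stand in for the “Companion” Trigger Event.
- The injected `fetch_companion_data` callable stands in for the “Companion” Flow Action.
- The injected `forward` callable stands in for a follow-up action, such as posting an Adaptive Card.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class CompanionTriggerEvent:
    # Hypothetical payload; the real "Companion" trigger fields may differ.
    session_id: str
    event_type: str  # illustrative value, e.g. "TranscriptionCompleted"


def handle_companion_event(
    event: CompanionTriggerEvent,
    fetch_companion_data: Callable[[str], str],
    forward: Callable[[str, str], None],
) -> bool:
    """Step 1: react to the trigger event. Step 2: use the (stand-in)
    Flow Action to fetch the session's data, then forward it onward."""
    if event.event_type != "TranscriptionCompleted":
        return False  # ignore session events this flow does not handle
    transcript = fetch_companion_data(event.session_id)  # stands in for the Flow Action
    forward(event.session_id, transcript)                # e.g. Teams Adaptive Card or email
    return True


if __name__ == "__main__":
    fake_store = {"S-1": "Customer asked about an invoice."}
    sent = []
    handled = handle_companion_event(
        CompanionTriggerEvent("S-1", "TranscriptionCompleted"),
        fetch_companion_data=fake_store.__getitem__,
        forward=lambda sid, text: sent.append((sid, text)),
    )
    print(handled, sent)
```

Injecting the fetch and forward steps keeps the sketch testable. It also mirrors how a flow separates the Companion action from whatever target the data is sent to.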

💡Some Use Case examples from our Knowledge Base:


AZURE BILLING

Using the Transcription feature incurs additional monthly ACS costs, which are determined by Microsoft. Also see https://azure.microsoft.com/en-us/pricing/details/cognitive-services/speech-services/.

- Before enabling the Transcription feature, get in touch with your Luware Customer Success specialist to discuss terms, usage scenarios and necessary setup procedures.

- Please note that Nimbus and Transcription support does not cover pricing discussions or make recommendations based on Microsoft Azure infrastructure.

When active, the Transcription feature transcribes call contents using Voice-to-Text capabilities. It improves quality assurance and increases the efficiency of call processing, for example making it easier to summarize past customer interactions and possibly transfer them to a CRM system for easier search and later analysis.

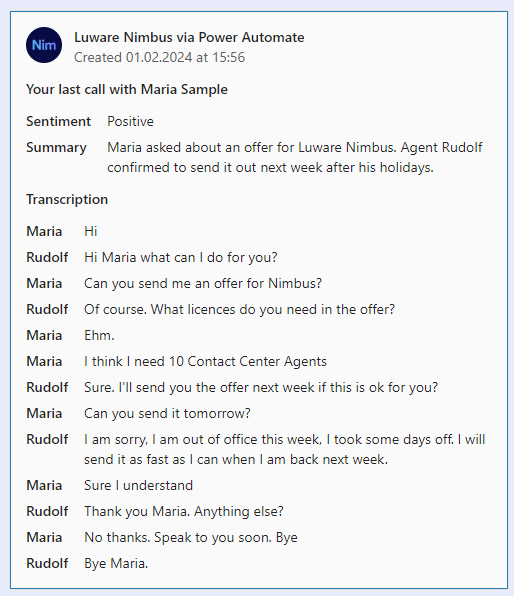

Once a call has ended, a Voice Transcription is generated in the background. Using the Nimbus Power Automate Connector > “Companion” Trigger Events and Flow Actions, you can then forward the generated transcription to any target, e.g. by wrapping it into an Adaptive Card as a Teams chat message, or via email.


Optionally, you can also show the transcription in My Sessions, giving users a direct written record of previous conversations with customers.

Summarization


✅ PRECONDITIONS

Companion - Summarization is an optional feature as part of Transcription:

- The Transcription feature must be enabled on the Companion Service Settings, as the Summarization is generated from it.

- Companion Service Settings also steer the individual feature availability to users, as well as the data storage retention time.

- Each user accessing the feature requires a Companion License, applied via General User Settings.

The Nimbus Companion integrates AI summarization as part of a broader suite of Transcription features, aimed at improving service performance and agent efficiency. During a call - when Live Captioning is enabled - Nimbus transcribes the conversation in real time. After the call, the transcript is processed by an AI service to generate a summary with the following aspects:

| Aspect | Description |
|---|---|
| Title | A title generated from the Call Direction, the Service, and the User who accepted the task. |
| Issue | A short description of the problem stated by the Customer. |
| Resolution | Summary of how the issue was resolved. |
| Recap | A brief paragraph summarizing the interaction. |
| Narrative | Detailed call notes or chat summary of the entire Customer/User interaction. |
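Administrators who store Summarization outputs in a CRM can treat the five aspects as one record. The sketch below is a hypothetical data shape, not the actual Nimbus output schema. The field names, the title format in `build_title` and the `to_note` helper are assumptions for illustration. All aspects except the title are optional: summarization items can be missing when the transcript holds too little data.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class CompanionSummary:
    """Hypothetical record mirroring the Summarization aspects table."""
    title: str                        # from Call Direction, Service and accepting User
    issue: Optional[str] = None       # short problem description by the Customer
    resolution: Optional[str] = None  # how the issue was resolved
    recap: Optional[str] = None       # brief paragraph summarizing the interaction
    narrative: Optional[str] = None   # detailed notes of the entire interaction


def build_title(call_direction: str, service: str, user: str) -> str:
    # Illustrative format only; Nimbus may compose the title differently.
    return f"{call_direction} call | {service} | {user}"


def to_note(summary: CompanionSummary) -> str:
    """Render the summary as plain-text note lines, skipping missing aspects."""
    lines = []
    for aspect in fields(summary):
        value = getattr(summary, aspect.name)
        if value:  # None or "" means the aspect could not be generated
            lines.append(f"{aspect.name.capitalize()}: {value}")
    return "\n".join(lines)


if __name__ == "__main__":
    summary = CompanionSummary(
        title=build_title("Inbound", "Helpdesk", "Alex"),
        issue="Printer offline",
        recap="Agent restarted the print spooler.",
    )
    print(to_note(summary))
```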

Summarization Outputs

Via My Sessions: Summarization outputs can be presented to the agent via My Sessions > Companion widget.

💡All aspects shown within the Companion Widget > Summarization can be hovered over and copied.

✅ Precondition: The “Companion” widget itself needs to be enabled in the Extensions Service Settings in order to be shown to users.

Via Nimbus Power Automate Connector: Administrators can also retrieve and store Summarization outputs flexibly, using Companion Flow Actions and the User/Service SessionID. This can be useful to keep a record of calls, e.g. within a CRM or a long-term data storage and evaluation platform.

Known Limitations

Summarization relies on the Transcription feature and therefore shares the same design limitations.


KNOWN TRANSCRIPTION & SUMMARIZATION LIMITATIONS

💡We are actively working on further improvements to the following limitations:

- Transcription data is not part of service/user transfers. Data is kept within the current customer/user session.

- Transcription > Summarization features are still in preview. Summarization is only available in My Sessions.

Troubleshooting FAQ


Not seeing any transcripts in the Transcript widget can have several causes. The following table lists error messages and explains why they are shown.

| Message shown | 🤔 Why do I see the message? |
|---|---|
| No transcription is available for this interaction. | There was no transcription generated for the selected conversation. This could happen … |
| No transcription is available for this interaction OR Task was not accepted. | |
| Transcription not available for this session. No consent granted. | Tenant Administrator consent has not been granted in Data Privacy Tenant Settings > Artificial Intelligence. |
| User Transcription License is missing. | The user has no Companion License assigned in General User Settings. |

BY DESIGN

💡The following points are not limitations.

- Feature availability:

  - Transcription is a prerequisite for Live Captioning and Summarization. A working Speech Recognizer must be configured for any of these features to work.

  - Transcription relies on external services and APIs. When the feature is disabled or unavailable (e.g. due to throttling, settings, or Microsoft service impediments), info messages are shown in the frontend UI widget. Nimbus task handling and user-customer interactions are not affected and continue normally.

- Transcription scope:

  - 3rd-party participants: Third parties and call conference attendees are not transcribed. Only the conversation between the Nimbus user and the customer is kept.

  - Supervision: As long as Supervisors remain in Listen or Whisper mode during a conversation, their voice is not transcribed. Only during “Barge In” are they part of the conversation and included in the transcription.

  - Summarization items generated from the Transcription might be missing when there is not enough data to draw from.
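The preconditions spread across this page can be summarized as a checklist: tenant consent, a Companion License, a Speech Recognizer, and Transcription being enabled. The sketch below is a hypothetical illustration. `CompanionSetup`, its boolean fields and `missing_preconditions` are invented names that mirror the settings described in the text, not a Nimbus API. The returned messages are not the exact UI texts.

```python
from dataclasses import dataclass
from typing import List

# Features named on this page; all of them build on Transcription.
KNOWN_FEATURES = {
    "Transcription", "Summarization", "Live Captioning",
    "Codes Suggestion", "Tags Suggestion",
}


@dataclass
class CompanionSetup:
    tenant_ai_consent: bool             # Data Privacy Tenant Settings > Artificial Intelligence
    user_has_companion_license: bool    # General User Settings
    speech_recognizer_configured: bool  # Speech Recognizer in the Nimbus Configuration
    transcription_enabled: bool         # Companion Service Settings


def missing_preconditions(setup: CompanionSetup, feature: str) -> List[str]:
    """Return why `feature` cannot work; an empty list means all preconditions are met."""
    if feature not in KNOWN_FEATURES:
        raise ValueError(f"Unknown Companion feature: {feature}")
    missing = []
    if not setup.tenant_ai_consent:
        missing.append("Tenant Administrator consent has not been granted")
    if not setup.user_has_companion_license:
        missing.append("User has no Companion License")
    if not setup.speech_recognizer_configured:
        missing.append("No Speech Recognizer is configured")
    if not setup.transcription_enabled:
        if feature == "Transcription":
            missing.append("Transcription is not enabled in the Companion Service Settings")
        else:
            # Summarization, Live Captioning and Codes/Tags Suggestion depend on Transcription.
            missing.append(f"{feature} requires Transcription to be enabled")
    return missing


if __name__ == "__main__":
    setup = CompanionSetup(True, True, True, transcription_enabled=False)
    print(missing_preconditions(setup, "Live Captioning"))
```

The “Companion” widget in the Extensions Service Settings is deliberately left out of this check. It only controls whether users see the features; the features themselves still run in the background.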

Codes Suggestion


✅ Precondition: Transcription must be enabled and a Transcriber set in order to use Codes Suggestion.

The Codes Suggestion feature provides code suggestions in My Sessions after a call has finished. If you haven't added codes to the session yet, Companion suggests them automatically. If you have already added codes, click the suggest icon to see and apply code suggestions.

💡When configuring Codes in your Configuration, you can add context related to each code in the corresponding field to help Nimbus Companion suggest codes.

Tags Suggestion


✅ Precondition: Transcription must be enabled and a Transcriber set in order to use Tags Suggestion.

The Tags Suggestion feature provides up to 5 tag suggestions in My Sessions, chosen from the list of tags already assigned to service sessions. After a call has finished, tag suggestions are shown if you haven't added tags to the session yet. You can either select specific tags or apply them all. Tags you have already selected are never overwritten; in this case, click the suggest icon to see and apply tag suggestions.
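The sketch below models the constraints described above:

- at most five suggestions,
- drawn only from tags already assigned to service sessions,
- shown automatically only when the session has no tags yet.

How Companion actually scores tags against the call context is not documented here. The `relevance` scores are therefore an assumption, and both function names are hypothetical.

```python
from typing import Dict, List, Sequence

MAX_TAG_SUGGESTIONS = 5  # "up to 5 tag suggestions"


def suggest_tags(relevance: Dict[str, float], assigned_service_tags: Sequence[str]) -> List[str]:
    """Keep only tags already used on service sessions, rank them by an
    (assumed) relevance score, and return at most five of them."""
    allowed = set(assigned_service_tags)
    candidates = [tag for tag in relevance if tag in allowed]
    candidates.sort(key=lambda tag: relevance[tag], reverse=True)
    return candidates[:MAX_TAG_SUGGESTIONS]


def tags_to_show_automatically(session_tags: Sequence[str], suggestions: List[str]) -> List[str]:
    # Suggestions appear on their own only for untagged sessions. Otherwise the
    # user opens them via the suggest icon, and existing tags are never replaced.
    return suggestions if not session_tags else []


if __name__ == "__main__":
    scores = {"billing": 0.9, "refund": 0.8, "vip": 0.7, "outage": 0.6,
              "printer": 0.5, "brand-new": 0.99, "login": 0.4}
    assigned = ["billing", "refund", "vip", "outage", "printer", "login"]
    picks = suggest_tags(scores, assigned)
    print(picks)
    print(tags_to_show_automatically(["existing"], picks))
```

The Codes Suggestion description follows the same rule: suggestions appear automatically for sessions without codes, and on demand via the suggest icon otherwise.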

Virtual Assistants

Live Captioning

✅ Precondition: Transcription must be enabled to use Live Captioning.


This feature is in PREVIEW and may not yet be available to all customers. Functionality, scope and design may change considerably.

The Live Caption feature converts the voices of call participants into text during the call, using Voice-to-Text capabilities. Contents are displayed live on the My Sessions page.

Follow-Up Actions

For Service Owners

✅Enabling the feature visibility:

- For Transcription and Summarization features to be visible to your users, the “Companion” widget must be enabled via Extensions Service Settings > Widgets. You can also choose not to show these features; Companion features then still run in the background.

💡Please note that Transcription-related features only apply to new incoming sessions, not retroactively to past sessions. Once the features are enabled, inform your Service team so they know to check their My Sessions view accordingly.

For Administrators

✅Power Automate alternative for Companion data: You can also leverage the Nimbus Power Automate Connector by using “Companion” related Trigger Events and Flow Actions, as described in our example Use Case - Analyzing a Transcript. While this adds configuration complexity, it gives you finer control over the transcription data, allowing you to process or store it externally, or to show it to additional users via Adaptive Cards.

Known Limitations

The known limitations, troubleshooting FAQ and by-design behavior for all Transcription-based features are listed in the Summarization section above.